AI readiness is not a technology decision. It is an organizational one. The contact center that waits for AI and the one that deployed AI without a plan are heading toward the same outcome. Both lose ground to the organization that built the foundation first.

AI is living in your competitors’ workflows right now. In their contact center knowledge bases, escalation queues, and agent desktops. According to McKinsey, 88% of organizations now use AI in at least one business function, yet fewer than one in five use it to improve contact center workflows. The leaders pulling ahead in 2026 are not the most aggressive deployers; they are the most deliberate ones. This article gives you 10 direct questions organized across four domains. Read each section. Check what your organization has answered. Leave knowing exactly where the gaps are.

HOW TO READ THE PRIORITY TAGS

| TAG | WHEN | WHAT IT MEANS |
|---|---|---|
| DO NOW | Before you deploy anything | Non-negotiable prerequisites. If these are not in place, every AI investment built on top is at risk. Stop here before anything else. |
| 90-DAY | Within your first quarter | Not prerequisites but close. Delay past 90 days and customer trust, compliance exposure, and brand risk become real problems. |
| 2026 BUILD | Over the next 12–18 months | Your competitive moat. The metrics and frameworks that separate CX leaders who got AI right from those who just got AI live. |

01 FOUNDATION: Before you build anything

Two decisions made before you select a vendor will determine whether your AI project succeeds or quietly fails. Gartner predicts that through 2026, organizations will abandon 60% of AI projects that are not supported by AI-ready data. Most organizations skip both decisions and discover this the hard way.

Is your CX data AI-ready?

Here is what most vendors will not tell you: their model is only as good as what you feed it. Fragmented conversation history, inconsistent CSAT codes, siloed customer profiles, and vague resolution labels produce AI that reflects your contact center’s worst habits back to your customers at scale.

Run a data readiness audit before you sign anything. Not during onboarding. Before. Map every data source your AI will touch, assess its quality and completeness, and document the gaps explicitly. This is not a technical task. It is a strategic one. The audit tells you how long it will really take to go live and what the AI will actually be working with when it does.
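
As a starting point, the audit can be as simple as measuring how complete each field is across an export of historical interactions. The sketch below is a minimal illustration, not a full audit: the field names in `REQUIRED_FIELDS` and the 95% threshold are assumptions, so substitute whatever your own systems export and whatever bar your team agrees on.

```python
from collections import Counter

# Hypothetical field names; substitute the fields your own systems export.
REQUIRED_FIELDS = ["customer_id", "channel", "contact_driver", "resolution_code", "csat"]


def audit_records(records, required_fields=REQUIRED_FIELDS):
    """Return the share of records with a usable value for each required field."""
    total = len(records)
    filled = Counter()
    for record in records:
        for field in required_fields:
            value = record.get(field)
            # Treat missing keys, None, and blank strings as gaps.
            if value is not None and str(value).strip() != "":
                filled[field] += 1
    return {f: (filled[f] / total if total else 0.0) for f in required_fields}


def gaps(completeness, threshold=0.95):
    """List fields whose completeness falls below the chosen threshold (assumed 95%)."""
    return sorted(f for f, share in completeness.items() if share < threshold)


# Illustrative sample data, not real customer records.
sample = [
    {"customer_id": "c1", "channel": "chat", "contact_driver": "billing",
     "resolution_code": "R1", "csat": 4},
    {"customer_id": "c2", "channel": "voice", "contact_driver": "",
     "resolution_code": None, "csat": 5},
]
report = audit_records(sample)
print(report)
print(gaps(report))
```

Completeness is only one dimension of readiness. Consistency of codes across teams and channels still needs a human review, but a report like this makes the gaps explicit before a contract is signed.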

Have you defined “great resolution” before automating it?

Automation does not improve outcomes. It locks them in. If your best agents resolve a billing dispute in a certain way, AI can scale that. If your process is inconsistent, AI scales the inconsistency.

Before any AI touches a customer interaction, your team needs a documented answer to a deceptively simple question: what does excellent look like for each of your top ten contact drivers? Not deflection. Not handle time. Resolution quality. Write it down. Make it specific. That document becomes your evaluation rubric, your training reference, and your ongoing measurement standard.

This matters most for first contact resolution. FCR is the metric your customers feel most directly, and the one AI can either dramatically improve or silently destroy. Medavie Blue Cross achieved a 6% FCR improvement after implementing structured knowledge flows with Procedureflow. That kind of outcome is only possible when the definition of a good resolution is established before the AI is deployed, not after.

| # | Checklist Item | TAG |
|---|---|---|
| 1 | CX data is structured, accessible, and clean | DO NOW |
| 2 | “Great resolution” is defined per contact driver | DO NOW |

FOUNDATION READINESS SIGNALS

| SIGNAL | WHAT TO CHECK |
|---|---|
| Conversation history | Is it accessible, structured, and complete enough to train on? |
| CSAT and resolution codes | Are they applied consistently across channels and teams? |
| Customer profile data | Is it unified, or does each system hold a different version? |
| Contact driver definitions | Do you have documented quality outcomes per driver? |

02 GOVERNANCE: Trust as a design principle

Customer trust is the asset your AI deployment is either building or eroding with every interaction. Governance is not a compliance checkbox. It is product design.

Are your AI escalation paths explicit, not assumed?

Ask your team this question today: when AI cannot resolve a customer’s issue, exactly what happens? If the answer involves pauses, qualifications, or phrases like “it should route to,” your escalation path is not designed. It is assumed.

The moment a customer repeats themselves to a human agent after an AI interaction, you have created a trust deficit that the rest of the journey cannot recover. Design the handoff as carefully as you design the AI interaction itself. Specify what context transfers, what the agent sees, and what the customer does not have to say again.

Whether your contact center runs Salesforce, NiCE CXone, or another CCaaS platform, the escalation path works the same way: the agent-assist layer picks up exactly where the AI left off. That continuity is not automatic. It has to be designed. The organizations that get this right treat escalation design as a product decision, not an IT ticket.
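
One way to make the handoff explicit is to write down the context that must transfer and check it before an escalation is routed. The sketch below is a minimal illustration under stated assumptions: `HandoffContext` and its fields are hypothetical, not the schema of any CCaaS platform, and your own list of required context may differ.

```python
from dataclasses import dataclass, field


@dataclass
class HandoffContext:
    """Hypothetical payload passed from the AI layer to a human agent."""
    customer_id: str
    contact_driver: str
    customer_summary: str                                 # what the customer already said
    steps_attempted: list = field(default_factory=list)   # what the AI already tried
    escalation_reason: str = ""                           # why the AI handed off


def missing_context(ctx):
    """Return the fields whose absence would force the customer to repeat themselves."""
    missing = []
    for name in ("customer_id", "contact_driver", "customer_summary", "escalation_reason"):
        if not getattr(ctx, name).strip():
            missing.append(name)
    if not ctx.steps_attempted:
        missing.append("steps_attempted")
    return missing


# Illustrative example: the AI escalated but did not record why.
ctx = HandoffContext(
    customer_id="c1",
    contact_driver="billing dispute",
    customer_summary="Charged twice in March",
    steps_attempted=["Verified identity"],
)
print(missing_context(ctx))
```

Running a check like this in testing turns "it should route to" into a pass or fail result: an escalation with missing context is a design defect, not an agent problem.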

Do you have a clear AI transparency policy for customers?

This is simpler than most legal teams make it. One requirement: when a customer sincerely asks whether they are speaking with AI, they receive a truthful answer. Every time. Without exception.

The strategic case for transparency is stronger than the compliance case. Research consistently shows that customers who know they are using AI and have a good experience report higher satisfaction than customers who discover mid-interaction they were not speaking with a human. Honesty is not a brand risk. Perceived deception is.

Are legal and compliance teams involved before go-live?

In regulated industries (financial services, healthcare, insurance, utilities), AI that gives incorrect advice does not just disappoint customers. It creates liability. And in 2026, regulators are actively examining AI-generated customer communications.

Bring compliance in early. Not to slow the project down, but to identify the specific use cases that need guardrails before they go live. A brief review up front is worth months of remediation.

| # | Checklist Item | TAG |
|---|---|---|
| 3 | AI escalation paths are explicitly designed and tested | 90-DAY |
| 4 | AI transparency policy exists and is consistently applied | 90-DAY |
| 5 | Legal and compliance reviewed before customer-facing deployment | 90-DAY |

COST OF SKIPPING GOVERNANCE

| SKIPPED STEP | CONSEQUENCE |
|---|---|
| No escalation design | Customers repeat themselves. Trust degrades immediately and silently. |
| No transparency policy | Perceived deception when customers discover AI mid-interaction. |
| No legal review | Liability exposure in regulated industries, often discovered post-incident. |
| No compliance check | High-risk use cases go live without guardrails or fallback. |

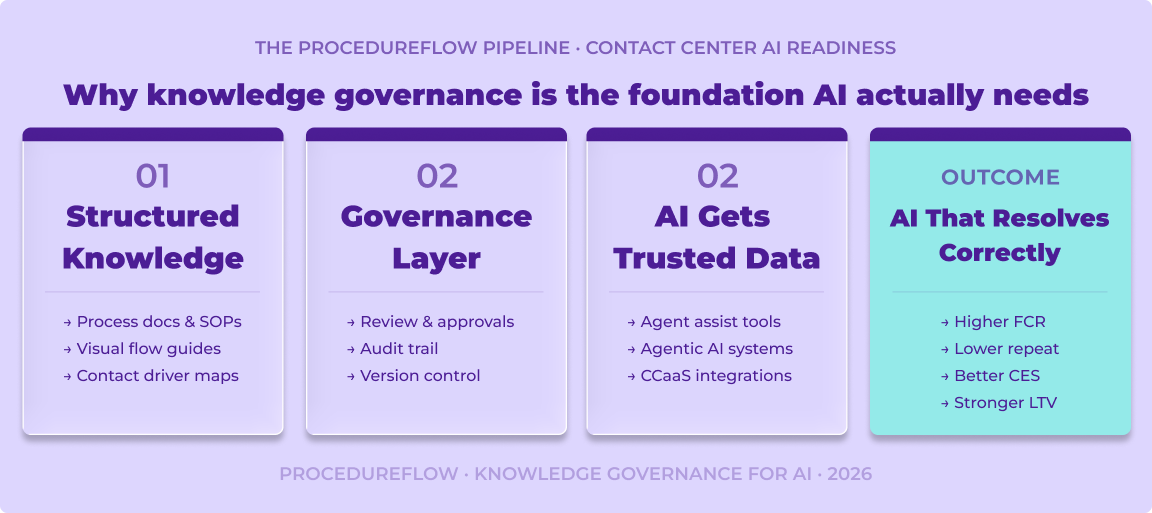

Why knowledge governance is the foundation AI actually needs

Every governance decision in this section depends on one thing: the quality of the knowledge your AI is operating from. Adobe’s 2026 AI and Digital Trends Report found that fewer than half of organizations have adequate data quality and accessibility for AI, and only 44% have a measurement framework in place for generative AI. The gap between ambition and readiness is almost always a knowledge problem, not a technology problem.

Procedureflow’s approach to knowledge governance for AI treats process documentation as a living system. When your AI is guided by accurate, structured, and consistently maintained knowledge, it resolves correctly. When it is guided by fragmented, outdated, or inconsistent documentation, it amplifies those inconsistencies at scale. Governance is what keeps the knowledge accurate. Knowledge is what keeps the AI trustworthy.

For contact center leaders specifically, this means: your AI is not a standalone deployment. It is a function of your knowledge infrastructure. The organizations outperforming their competitors on AI CX metrics in 2026 are almost universally the ones that streamlined their process workflows before they turned on the AI.

03 PEOPLE: Your agents are your moat

The fastest way to undermine an AI deployment is to introduce it without your frontline team. Their institutional knowledge is the competitive advantage your AI either amplifies or ignores.

Agent anxiety around AI is not irrational, and it is not just a change management problem. It is a signal. It tells you that your contact center has not yet answered the most important question your frontline staff are asking: what does my job look like when AI is here? Lorikeet’s research across hundreds of CX leaders found that change management is consistently one of the weakest readiness dimensions, and that organizations that involve agents early see adoption rates more than double those of organizations that do not.

Do your agents see AI as a tool, not a threat?

Share your AI roadmap with your frontline team before it is finalized. Not as a communication exercise, but as a design input. The agents handling your highest-complexity, highest-emotion customer interactions know things about your customers that no model has been trained on.

Involve them in pilots. Let them surface the edge cases. Let them flag the interactions where AI gets it wrong. Then show them that their feedback changed something. The organizations seeing the fastest AI adoption in 2026 are the ones that made agents co-authors of the AI experience, not recipients of a rollout announcement.

Are AI-augmented roles redefined in job descriptions and scorecards?

When AI resolves every Tier 1 contact, what exactly is a contact center agent doing? If your answer is “handling the escalations,” you have a role description, not a career.

Update the job description. Update the career path. Update the scorecard. Replace volume KPIs with quality metrics: resolution accuracy, customer effort score, escalation satisfaction. A high performer in an AI-first contact center is a different kind of professional. Treat them accordingly or your best people leave before the AI is fully deployed.

| # | Checklist Item | TAG |
|---|---|---|
| 6 | Agents are briefed, involved in pilots, and given a voice in design | DO NOW |
| 7 | AI-augmented roles have updated descriptions, paths, and scorecards | 2026 BUILD |

AGENTS IN AN AI-FIRST CONTACT CENTER: WHAT CHANGES

| OLD SCOPE | NEW SCOPE |
|---|---|
| Tier 1 query resolution | Complex, emotionally nuanced resolution only |
| Following scripts | Coaching AI on edge cases and exception patterns |
| Volume-based KPIs | Quality, customer effort score, and LTV-linked KPIs |
| Static job descriptions | Living roles updated alongside AI capability |

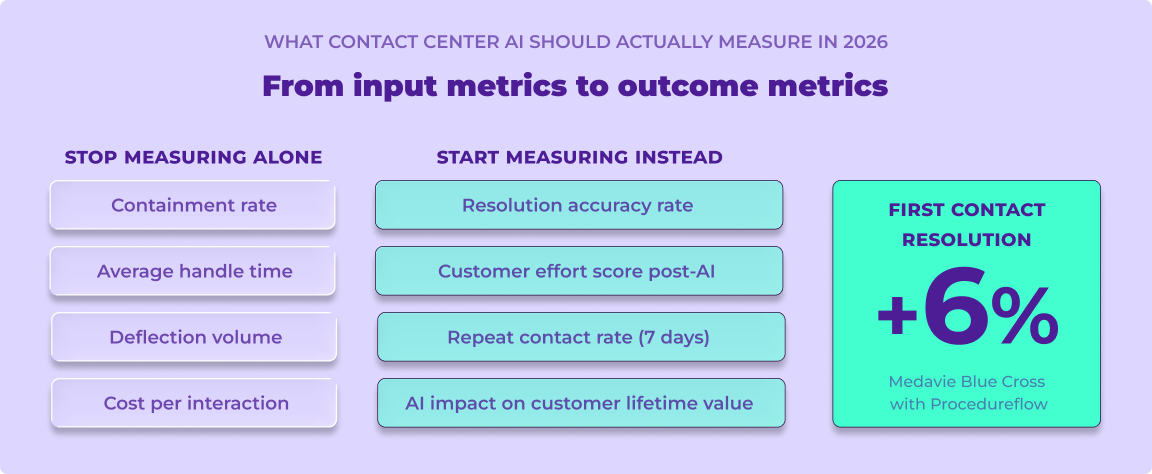

04 MEASUREMENT: Outcomes over inputs

Containment rate is the most commonly reported AI metric in CX. It is also the most misleading one. An AI that deflects volume is not the same as an AI that resolves problems.

Organizations that track containment rate without accuracy validation are incentivizing their AI to close conversations, not solve them. Adobe’s 2026 research found that only 31% of organizations have a measurement framework for agentic AI, meaning most contact centers are flying blind on whether their AI is actually working. Customers whose issues are not resolved simply call back, email again, or churn quietly. None of that shows up in your deflection dashboard.

Are you measuring AI quality, not just AI volume?

Four metrics tell you what containment rate cannot. Resolution accuracy rate: did the AI actually solve the problem? Customer effort score post-AI: how much did the customer have to work? Agent override rate: how often are agents correcting the AI’s output? Repeat contact rate within seven days: did the resolution hold?
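
To make these four metrics concrete, here is a minimal sketch of how they could be computed from a log of AI-handled interactions. The record fields (`resolved_correctly`, `effort_score`, `agent_override`, and so on) are assumptions for illustration, and "repeat contact" is defined here as the same customer returning about the same contact driver within the window. Your own definitions may reasonably differ.

```python
from datetime import datetime, timedelta


def quality_metrics(interactions, repeat_window=timedelta(days=7)):
    """Compute four AI quality metrics from a list of interaction dicts."""
    total = len(interactions)
    if total == 0:
        return {}

    accuracy = sum(1 for i in interactions if i["resolved_correctly"]) / total
    override = sum(1 for i in interactions if i["agent_override"]) / total

    # Effort scores are optional; average only the ones that were collected.
    scores = [i["effort_score"] for i in interactions if i["effort_score"] is not None]
    avg_effort = sum(scores) / len(scores) if scores else None

    # An interaction counts as repeated if the same customer comes back
    # about the same driver within the window.
    ordered = sorted(interactions, key=lambda i: i["started_at"])
    repeated = 0
    for idx, first in enumerate(ordered):
        for later in ordered[idx + 1:]:
            if later["started_at"] - first["started_at"] > repeat_window:
                break
            same_issue = (later["customer_id"], later["contact_driver"]) == (
                first["customer_id"], first["contact_driver"])
            if same_issue:
                repeated += 1
                break

    return {
        "resolution_accuracy_rate": accuracy,
        "avg_customer_effort_score": avg_effort,
        "agent_override_rate": override,
        "repeat_contact_rate": repeated / total,
    }


# Illustrative sample data only.
log = [
    {"customer_id": "c1", "contact_driver": "billing", "started_at": datetime(2026, 1, 1),
     "resolved_correctly": False, "effort_score": 4, "agent_override": False},
    {"customer_id": "c1", "contact_driver": "billing", "started_at": datetime(2026, 1, 3),
     "resolved_correctly": True, "effort_score": 2, "agent_override": True},
    {"customer_id": "c2", "contact_driver": "login", "started_at": datetime(2026, 1, 2),
     "resolved_correctly": True, "effort_score": 1, "agent_override": False},
    {"customer_id": "c2", "contact_driver": "login", "started_at": datetime(2026, 1, 20),
     "resolved_correctly": True, "effort_score": None, "agent_override": False},
]
metrics = quality_metrics(log)
print(metrics)
```

In this sample, the first billing contact would count as "contained" on a deflection dashboard, yet the customer came back two days later. That is the kind of gap these metrics are meant to surface.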

Is there a feedback loop from AI failures to the business?

Every AI failure is information. A misrouted ticket tells you something about intent classification. An incorrect response tells you something about your knowledge base. Build the pipeline. Connect AI error logs to product, operations, and knowledge teams. The best CX organizations in 2026 treat AI misses as the most valuable data they collect because that is where the improvement headroom lives.
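
The pipeline does not need to be elaborate to be useful. The sketch below groups failure records by the team best placed to act on them. The failure types, the owner mapping, and the fallback owner are all hypothetical; the point is only that every logged failure lands in someone's queue.

```python
from collections import defaultdict

# Hypothetical mapping from failure type to the team that can act on it.
OWNERS = {
    "misrouted": "operations",         # points at intent classification and routing
    "incorrect_answer": "knowledge",   # points at the knowledge base
    "missing_capability": "product",   # points at something the AI cannot yet do
}


def route_failures(error_log, owners=OWNERS, default_owner="cx_leadership"):
    """Group AI failure records by the team responsible for the fix."""
    queues = defaultdict(list)
    for entry in error_log:
        # Unknown failure types go to a default owner so nothing is dropped.
        owner = owners.get(entry["failure_type"], default_owner)
        queues[owner].append(entry)
    return dict(queues)


# Illustrative sample data only.
errors = [
    {"id": 1, "failure_type": "misrouted"},
    {"id": 2, "failure_type": "incorrect_answer"},
    {"id": 3, "failure_type": "tone_complaint"},
]
queues = route_failures(errors)
for team, items in queues.items():
    print(team, [item["id"] for item in items])
```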

Are you connecting AI performance to customer lifetime value?

Cost reduction is a legitimate outcome. It should not be the primary one. Build the model that links AI resolution quality to customer retention and lifetime value. Once you can show that connection, your AI investment stops being a cost line and becomes a revenue line. That is the conversation that changes how the entire organization thinks about CX.

To learn how Procedureflow helps contact center teams build the knowledge foundation AI needs, visit the features page. You can also explore how Procedureflow integrates with contact center platforms like NiCE CXone to deliver real-time visual guidance to agents.

| # | Checklist Item | TAG |
|---|---|---|
| 8 | AI quality scorecard tracks accuracy, effort, override, and repeat contact | DO NOW |
| 9 | AI failure feedback loop runs from error logs to product and ops teams | 2026 BUILD |
| 10 | AI resolution quality is modeled against retention and lifetime value | 2026 BUILD |

FROM INPUT METRICS TO OUTCOME METRICS

| STOP MEASURING ALONE | START MEASURING INSTEAD |
|---|---|
| Containment rate | Resolution accuracy rate |
| Average handle time | Customer effort score post-AI |
| Deflection volume | Repeat contact rate within 7 days |
| Cost per interaction | AI impact on customer lifetime value |

Score yourself. Then act.

The checklist above covers what no vendor pitch deck will tell you: the data, governance, people, and measurement foundations that determine whether AI becomes a competitive advantage or a customer relationship liability.